# Beyond Legal Headaches: Why the FTC’s Noncompete Warning Is an AI Imperative for Pharma

A recent advisory from the U.S. Federal Trade Commission has sent a clear signal to the healthcare industry: broad noncompete agreements for clinical staff may be on legally shaky ground. On the surface, this is a labor and legal issue, promising greater mobility for physicians and nurses. But dig a little deeper, and you’ll find a profound, latent challenge for one of the most data-sensitive operations in the world: the clinical trial.

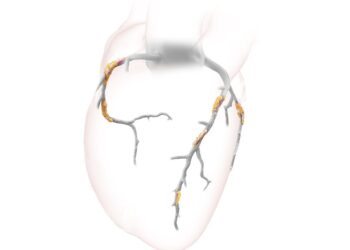

The immediate consequence of this regulatory pressure is talent churn. For a hospital, losing a cardiologist is a staffing problem. For a pharmaceutical company running a multi-year, multi-site oncology trial, losing that same cardiologist—who also happens to be a Principal Investigator (PI)—can be a data integrity crisis.

This is where the conversation must shift from human resources to artificial intelligence.

### The Human Element as a Data-Quality Bottleneck

In the world of clinical research, we often treat data as an objective, pristine output. It isn’t. A significant portion of clinical trial data, especially for endpoints related to quality of life, side effect severity, or tumor response assessment, is filtered through the subjective interpretation of a human expert. A physician’s assessment of a rash as “mild” versus “moderate” or a patient’s reported pain on a 1-10 scale is captured and codified as a hard data point.

This system works, albeit imperfectly, because of consistency. Over time, an investigator and their clinical team develop an internal, often unspoken, calibration for these subjective measures. They see the same patients repeatedly, building a deep longitudinal understanding that informs their data entry.

Now, introduce instability.

When a key physician or specialist nurse leaves mid-trial and is replaced, you don’t just swap one person for another. You introduce a new variable into your data collection model. The new investigator, however skilled, will have a slightly different baseline for what constitutes a “Grade 2” adverse event versus a “Grade 3.” This rater-to-rater variation, which shows up as low inter-rater reliability, introduces noise and bias into the dataset. Multiplied across multiple sites and over several years, this noise can be enough to confound trial results, obscure a genuine safety signal, or mask a drug’s efficacy.
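To make "inter-rater reliability" concrete, here is a minimal sketch of Cohen's kappa, a standard chance-corrected agreement statistic, computed for two investigators grading the same cases. The grade lists and variable names are hypothetical illustrations, not data from any trial. Plain kappa also treats grades as unordered labels, so a weighted variant may suit ordinal adverse-event grades better.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who graded the same cases in the same order."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must grade the same non-empty set of cases")
    n = len(rater_a)

    # Observed agreement: fraction of cases where both raters gave the same grade.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n

    # Expected agreement by chance, from each rater's own grade frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    grades = freq_a.keys() | freq_b.keys()
    expected = sum(freq_a[g] * freq_b[g] for g in grades) / (n * n)

    if expected == 1.0:
        # Both raters used a single identical grade throughout; agreement is total.
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical adverse-event grades for the same ten cases.
departing_pi = [1, 2, 2, 3, 1, 2, 3, 2, 1, 2]
incoming_pi = [1, 2, 3, 3, 1, 3, 3, 2, 1, 3]

kappa = cohens_kappa(departing_pi, incoming_pi)
print(f"Cohen's kappa: {kappa:.3f}")
```

A kappa near 1 means the two raters are close to interchangeable. A value well below 1 suggests the incoming investigator is calibrated differently, which is the kind of shift the rest of this post is concerned with.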

The traditional solution—extensive retraining, monitoring, and source data verification—is slow, expensive, and reactive. We are, in effect, trying to manually patch a systemic vulnerability.

### Building Resilience with an AI Stability Layer

This is not a people problem; it’s a systems problem that AI is uniquely positioned to solve. Instead of simply reacting to staff turnover, we can build more resilient data pipelines from the ground up. Here’s how:

* **AI-Powered Data Harmonization:** Machine learning models, particularly those using Natural Language Processing (NLP), can analyze clinical notes and structured data entries from different investigators over time. By learning the specific reporting patterns of each individual (“Dr. Smith consistently rates X as ‘mild,’ while Dr. Jones rates similar cases as ‘moderate’”), the system can identify and flag potential inconsistencies when a new investigator takes over. In advanced cases, it can even suggest normalized values to harmonize the dataset, mitigating the bias introduced by personnel changes.

* **Predictive Anomaly Detection:** We can deploy AI to act as an early warning system. An algorithm can monitor the data stream from each clinical site in real time. If the statistical distribution of adverse event grades, patient-reported outcomes, or other key metrics suddenly shifts at a site immediately following a known staff change, the system can automatically alert trial managers. This allows for proactive intervention before the data quality degrades significantly.

* **Shifting to Objective Endpoints:** The ultimate solution is to reduce reliance on subjective human interpretation altogether. This is where AI and connected devices excel. Instead of relying solely on a physician’s assessment of a patient’s mobility, we can use wearable sensors to gather objective data on step count, gait, and activity levels. AI-powered imaging analysis can provide more consistent and quantifiable assessments of tumor size or skin lesion progression than the human eye alone. By designing trials that incorporate these objective, machine-readable endpoints from the start, we make the entire process less fragile and less susceptible to the “human factor” of staff turnover.
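The early-warning idea in the second bullet does not need a sophisticated model to get started. As a rough sketch, the snippet below compares the distribution of adverse event grades at one site before and after a staff change using a Pearson chi-square test of homogeneity. The function names, the 5% significance level, and the sample counts are all assumptions chosen for illustration. A production system would also need to account for small expected counts and for running many such tests across sites.

```python
from collections import Counter

# Upper 5% critical values of the chi-square distribution, by degrees of freedom.
CHI2_CRIT_05 = {1: 3.841, 2: 5.991, 3: 7.815, 4: 9.488}


def chi_square_shift(before, after):
    """Return (chi-square statistic, degrees of freedom) for a 2 x K table of grades."""
    if not before or not after:
        raise ValueError("both periods need at least one observation")
    counts_before, counts_after = Counter(before), Counter(after)
    grades = counts_before.keys() | counts_after.keys()
    n_before, n_after = len(before), len(after)
    total = n_before + n_after

    stat = 0.0
    for grade in grades:
        column_total = counts_before[grade] + counts_after[grade]
        for observed, row_total in (
            (counts_before[grade], n_before),
            (counts_after[grade], n_after),
        ):
            # Expected count if both periods shared one grade distribution.
            expected = row_total * column_total / total
            stat += (observed - expected) ** 2 / expected
    return stat, len(grades) - 1


def flag_shift(before, after):
    """True if the grade distribution differs between periods at the 5% level."""
    stat, dof = chi_square_shift(before, after)
    if dof == 0:
        return False  # only one grade ever observed, so nothing can have shifted
    if dof not in CHI2_CRIT_05:
        raise ValueError("critical-value table covers up to five grade categories")
    return stat > CHI2_CRIT_05[dof]


# Hypothetical grade counts at one site, before and after a PI change.
grades_before = [1] * 30 + [2] * 15 + [3] * 5
grades_after = [1] * 10 + [2] * 20 + [3] * 20

if flag_shift(grades_before, grades_after):
    print("Alert: grade distribution shifted after the staff change")
```

A flag here is a prompt for human review, not a verdict. The patient mix may have genuinely changed, so the alert should route to a trial manager rather than trigger any automatic correction.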

### A Forcing Function for Modernization

The FTC’s stance on noncompetes may seem like a distant regulatory issue to those of us focused on technology and data. It’s not. It is a powerful forcing function that exposes a fundamental weakness in how clinical trials are conducted.

Companies that view this simply as a challenge for their legal and HR departments will be perpetually on the back foot, reacting to disruptions. The forward-thinking organizations will recognize this as a catalyst to de-risk their most valuable assets: their clinical data. They will invest in the AI-driven tools and infrastructure needed to build trials that are resilient by design, ensuring that the integrity of their research remains robust, no matter who is holding the clipboard.

This post is based on the original article at https://www.bioworld.com/articles/724099-ftc-advises-health-care-entities-on-use-of-noncompete-agreements.